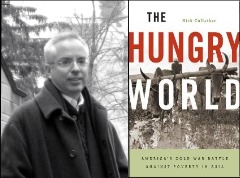

Dr. Nick Cullather writes on the history of development and nation-building. His most recent book, The Hungry World: America’s Cold War Battle against Poverty in Asia, was just published by Harvard University Press. He is the author of two other books, Secret History, a study of the CIA’s overthrow of Guatemala’s government, and Illusions of Influence, on cliental politics in the Philippines. He is associate professor of history at Indiana University.

_____

[FPA Q1.] Many see the “green revolution” that occurred in Asia during the 1960s as proof that the science from the developed world could overcome near catastrophe in the developing world, particularly Asia. In your book, you offer a different explanation of the “green revolution.” Could you explain?

[NC] After years of drought and scarcity, the spectacular harvest in 1968 turned the food situation around. No one was more dismayed by the media “fairy tales” about this achievement than the scientists and aid officials who engineered it. The legend has two components: firstly, that Asia was in the grips of a true Malthusian crisis in the 1960s, a chronic scarcity resulting from overpopulation and backward agriculture, and secondly that technology–dwarf strains of wheat and rice–saved the day. Neither proposition holds up. Asian countries dramatically increased agricultural production in the 1950s and early ’60s, before the green revolution, but the growth was in non-food crops. Millions of tons of food aid from the United States financed the construction of steel mills and factories and kept wages in the growing Asian industrial cities low. It also allowed India and other countries to shift from food to cotton, jute, oils, and other crops that brought in the dollars needed for capitalization. So the food deficit that became unsustainable in the 1960s was not a Malthusian disaster; it was a policy.

What turned this situation around was a policy change. Before 1966, industrial planners squeezed the capital they needed from the peasantry. The result was rural insurgency and more reliance on U.S. and multilateral aid. But then Asian governments reversed course; they controlled prices, subsidized fertilizer and water, and shifted investment and income from the cities to the country. The green revolution amounted to a government capture of agriculture similar to what happened in the United States in the New Deal. Dwarf wheat and rice were crucial to this turnaround, not because of their effects on yield, which were modest, but because of their effects on government and multilateral officials. Norman Borlaug called them a “catalyst” for changing attitudes at the top. The World Bank, AID, and nationalist regimes had all been committed to an industry-first development strategy, but the new technologies, plus a “world food crisis” rustled up by Lyndon Johnson, supplied a pretext for a new consensus around “basic needs.” When farmers could make money from growing grain, they did, and within a year India and Pakistan had food surpluses. Amid the excitement about impending famines and miracle seeds, the crucial policy lessons got lost.

[FPA Q2.] Concerning Afghanistan, you write about how the U.S. had previously created development projects in the 1960s and 1970s to help Afghans produce food as a way of staying out of poverty. What were these projects?

[NC] At the end of World War II, Afghanistan was richer than Britain in terms of exchange reserves. It exported a valuable commodity, Persian lamb, which went into the glossy black fur coats favored by Doris Day. A single pelt could fetch $5,000 on the New York market. Pashtun nomads grazed their herds in the Hindu Kush in summer and in the Waziri valleys across the Pakistan border in winter. But while the trade in karakul sheep was lucrative, it could not be controlled or taxed by the Afghan government, so the king, Zahir Shah, and U.S. aid officials came up with a plan to settle the Pashtuns in the deserts west of Kandahar. The plan relied on a vast irrigation and land reclamation scheme along the Helmand River. American engineers built two large dams, the Kajaki and Arghandab, laid out plots, and built whole communities. In the 1950s, the area around Lashkar Gah, Girishk, and Marja was known as “little America.” The Truman and Eisenhower administrations saw it as an economic buffer against Soviet aggression.

Nation-building is not always good for farmers. To be a modern state, Afghanistan had to control its population and its economy, so it began a long and unsuccessful struggle to turn the Pashtuns into wheat farmers. To some extent, that’s the war we’re still fighting. “Agribusiness development teams” are embedded with the troops, but their predecessors’ attempt at nation-building is largely forgotten. When the Marines moved into Marja earlier this year, only one report mentioned that the concrete trenches the Taliban were firing from had been built by Americans a half century ago.

[FPA Q3.] Since 2001, the U.S. has been fighting in Afghanistan to prevent it from being a safe-haven for terrorists. Development projects have been launched to complement the military campaign. Could they be successful in stemming poverty in Afghanistan and allowing it to develop?

[NC] The winning hearts and minds (WHAM) strategy is designed mainly to mollify the Afghan population and to produce intelligence. But you are asking a bigger question. For over a century Americans have nurtured the hope that humanitarian aid could be a substitute for war. It’s a bipartisan dream; Woodrow Wilson and Herbert Hoover planted the idea that if the people of war-torn lands could have food, schools, and jobs they would lose their appetite for revolution. Truman’s Point IV took aim at the root causes of war, and both Eisenhower and Kennedy were convinced development could ensure that “societies which threaten ours will not evolve.” The record has been less clear. AID reported in 1975 that while the aid effort in South Vietnam had been overwhelmingly successful, the war, unfortunately, had not. And the 9/11 report observed that the terrorists came from relatively affluent countries that received substantial trade and aid from the United States. But the faith that aid projects will lead to transformative growth and reconciliation–what we might call the three-cups-of-tea doctrine–remains steadfast.

There are inspiring development success stories–the Marshall Plan, Korea, Taiwan, Israel–cases where the United States helped create thriving nations that were also strong allies. But in each of those cases war served as an impetus to growth rather than an impediment, and in each case “development” meant all-out industrialization guided by a strong state. Nothing like that is contemplated for Afghanistan, and nothing like it has ever happened there before. When it comes to economic growth or social change, history matters. If General Petraeus’s counter-insurgency strategy does produce a durable state and stem poverty and war in Afghanistan, it will be a first.

[FPA Q4.] Bono, Bill Gates, and President Obama are calling for a “second green revolution” for Africa. Can Africa repeat in the next decade what Asia achieved in the 1960s?

[NC] In some ways sub-Saharan Africa is similar to South Asia in the 1950s. It is a major exporter, sending corn, seafood, fruit, and vegetables to European markets, in addition to non-food crops, cotton, flax, oilseeds, and cut flowers. Its few industries are primary and extractive. Hunger is a problem not because Africans don’t grow enough food but because they can’t afford it. There are significant differences, too. Asia had a number of strong centralized governments with a single-minded focus on manufacturing. Nehru, Jiang, and Suharto imagined they could achieve self-sufficiency in a single generation. The Millennium Development Goals (halving the rate of hunger by 2015) are vastly less ambitious than Kennedy’s plan for all of free Asia to reach self-sustaining growth by the end of the decade. The funding commitments are correspondingly less. But the real difference is that “development” today means something very different from what it meant in the 1960s.

Development used to mean industrialization and central direction of the economy by the government. Since the 1990s the donor community has urged African governments to give up on their industrial ambitions and reduce interventions in the ag sector. Price controls, subsidies, and fertilizer supports have all been scaled back. The Gates and Rockefeller foundations and the Lugar-Casey Act are calling for a policy mix the reverse of the one Asia implemented in the 1960s. They mandate deregulation, production geared for export, and the abandonment of food self-sufficiency as a goal. One similarity is that, as in the original green revolution, genetic technology serves as a cover for sweeping policy changes that would be difficult to justify without the promise of a breakthrough.

There are reasons for concern here. In agriculture, higher productivity has never assured higher incomes or economic success. It more often leads to falling crop prices, poor farmers, and unemployment. It was this “paradox of plenty” that led the United States and Europe to manage production with subsidies and set-asides. The donor countries are pushing Africa down a different path. Africa is already substantially more urbanized than India was in the 1950s, and one foreseeable effect of agricultural modernization is an exodus of farm workers to the cities. In Asia there were nascent industries waiting, and the refugees became part of the cheap labor force that allowed those countries to capture world markets in textiles and plastics. Proponents of the green revolution 2.0 anticipate that displaced workers will “head to the cities” and find “local wage jobs” but they don’t indicate where those jobs will come from.

A lot of the development debate has focused on speculative possibilities–biotechnology, ICT4D, and the “Brazilian model”–rather than on Africa’s unusual position as an industry-free zone within the world economy. Development in the 1960s was grounded in a dynamic tension between agriculture and manufacturing that purportedly drove growth. The Obama administration has announced an eggs-in-one-basket approach. It expects African agriculture to overcome hunger, generate exports, and be the “primary means of driving economic growth and reducing poverty.” That strategy does have a precedent. It’s a continuation of the pattern colonial and post-colonial regimes in Africa have followed for 150 years. The second green revolution won’t be a repetition of the first, and it won’t even be a revolution.

[FPA Q5.] Americans have been trying for over a century now to eliminate world hunger. What lessons does that history hold for aid today and in the future?

[NC] There are three characteristics of American hunger policy that have remained remarkably constant. Each bears some reflection. The first is a determination to separate the causes of hunger from its context. The invention of the calorie as a practical unit of food measurement in 1896 began a turn toward identifying the “global food supply” as a purely technical problem isolated from the wars, environmental changes, social revolutions, booms and busts going on in the twentieth century. After World War I, wheat served as a universal food that the United States could send into any emergency. The Rockefeller Foundation looked for (and found) a generic model of agricultural efficiency that could be transplanted to different continents. IR-8, the dwarf rice, was praised as a genetic “Model T” because it could grow anywhere. Amartya Sen has pointed out that hunger has very little to do with overpopulation, food supply, or technology, but everything to do with the consumer’s entitlement to food. Entitlement is a political and economic problem that resists generic remedies. It is profoundly local and contextual.

Secondly, while the causes are narrowly technical, we expect solutions to have sweeping political ramifications. Americans have always believed that the power to alleviate hunger would confer an almost godlike power to win allies, stop wars, and change mentalities and societies. The green revolution was never just about food; it was primarily an effort to change the outlook of the vast majority of Asians who lived off the land. A scientific approach to farming, it was thought, would make peasants have fewer children, change their attitude toward the government, and stop shooting at American helicopters. Rural development was always put forward–as it still is today–as a form of psychological warfare; the campaign against hunger was a battle in the cold war.

Third, American foreign policy has, at least for the last 60 years, identified rurality as disconnected and threatening. “Underdeveloped” meant rural. The peasant or nomad, with his artisanal knowledge and dependence on sun and rain, was the natural enemy of modernity. For this reason, it’s been hard for us to envision what a “modern” agriculture might look like, and we tend to think of improvement either in terms of replacing rural values or institutions, or through inapt analogies: the farm as a factory or the farmer as a businessman. For much of the cold war, the aim of development policy was to evacuate the countryside and to strip the remaining peasants of their traditional ways. Pentagon strategists today see the predominantly rural “ozone hole” around the equator as the locus of future wars. Poverty, too, is seen as a problem that is spatially distant, even though statistics now show that only a quarter of the world’s poor live in poor countries. This rural/urban binary cripples clear thinking about hunger and the disturbances that give rise to it. The olive tree, the Afghan valley, and the African village are not disconnected from globalization. They are just more sensitive to its failures.

Interview conducted by Emma Fursland and Michael Lucivero.